2014:Audio Music Similarity and Retrieval Results

Latest revision as of 22:55, 4 February 2015

Introduction

These are the results for the 2014 running of the Audio Music Similarity and Retrieval task set. For background information about this task set please refer to the Audio Music Similarity and Retrieval page.

Each system was given 7000 songs chosen from IMIRSEL's "uspop", "uscrap" and "american" "classical" and "sundry" collections. Each system then returned a 7000x7000 distance matrix. From the 10 genre groups, 50 songs were randomly selected as queries (5 per genre), and for each query the 5 most highly ranked songs out of the 7000 were extracted, after filtering out the query itself and any returned results from the same artist. Then, for each query, the returned results (candidates) from all participants were grouped and evaluated by human graders using the Evalutron 6000 grading system. Each individual query/candidate set was evaluated by a single grader. For each query/candidate pair, graders provided two scores: one categorical BROAD score with 3 categories (NS, SS, VS, as explained below) and one FINE score (in the range from 0 to 100). A description and analysis are provided below.
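
The candidate extraction step described above can be sketched roughly as follows. This is a minimal Python illustration, not the code used by the evaluators: the function name `top_candidates`, the toy distance matrix and the artist labels are all hypothetical, and it assumes that a smaller distance means a more similar track.

```python
from typing import Sequence


def top_candidates(
    distances: Sequence[Sequence[float]],
    artists: Sequence[str],
    query: int,
    k: int = 5,
) -> list[int]:
    """Return up to k nearest track indices for `query`, skipping the
    query itself and any track by the same artist (hypothetical helper)."""
    # Sort all track indices by their distance to the query, nearest first.
    order = sorted(range(len(distances[query])), key=lambda j: distances[query][j])
    picked = []
    for j in order:
        if j == query or artists[j] == artists[query]:
            continue  # filter the query and same-artist results
        picked.append(j)
        if len(picked) == k:
            break
    return picked


# Toy 5-track collection (hypothetical data, not taken from the task set).
distances = [
    [0.0, 0.1, 0.4, 0.3, 0.9],
    [0.1, 0.0, 0.5, 0.2, 0.8],
    [0.4, 0.5, 0.0, 0.6, 0.7],
    [0.3, 0.2, 0.6, 0.0, 0.5],
    [0.9, 0.8, 0.7, 0.5, 0.0],
]
artists = ["A", "A", "B", "C", "D"]
print(top_candidates(distances, artists, query=0, k=2))  # prints [3, 2]
```

In the toy data, track 1 is the nearest neighbour of track 0 but shares its artist, so it is skipped and tracks 3 and 2 are returned instead.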

The systems read in 30 second audio clips as their raw data. The same 30 second clips were used in the grading stage.

General Legend

Team ID

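| Sub code | Submission name | Abstract | Contributors |

|---|---|---|---|

| FFMD1 | FFMD | [PDF](https://www.music-ir.org/mirex/abstracts/2014/FFMD1.pdf) | Peter Foster, György Fazekas, Matthias Mauch, Simon Dixon |

| GK1 | ILSP AMS | [PDF](https://www.music-ir.org/mirex/abstracts/2014/GK1.pdf) | Aggelos Gkiokas, Vassilis Katsouros, George Carayannis |

| GKC2 | ILSP AMS 2 | [PDF](https://www.music-ir.org/mirex/abstracts/2014/GKC2.pdf) | Aggelos Gkiokas, Vassilis Katsouros, George Carayannis |

| GKC3 | ILSP AMS 3 | [PDF](https://www.music-ir.org/mirex/abstracts/2014/GKC3.pdf) | Aggelos Gkiokas, Vassilis Katsouros, George Carayannis |

| PS1 | PS09 | [PDF](https://www.music-ir.org/mirex/abstracts/2011/PS1.pdf) | Dominik Schnitzer, Tim Pohle |

| SS2 | cbmr_sim_2013_resubmission | [PDF](https://www.music-ir.org/mirex/abstracts/2014/SS2.pdf) | Klaus Seyerlehner, Markus Schedl |

| SS4 | cbmr_sim_2014_submission | [PDF](https://www.music-ir.org/mirex/abstracts/2014/SS4.pdf) | Klaus Seyerlehner, Markus Schedl |

| SS5 | AM-ES | [PDF](https://www.music-ir.org/mirex/abstracts/2014/SS5.pdf) | Abhinava Srivastava, Mohit Sinha |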

Broad Categories

NS = Not Similar

SS = Somewhat Similar

VS = Very Similar

Understanding Summary Measures

Fine = Has a range from 0 (failure) to 100 (perfection).

Broad = Has a range from 0 (failure) to 2 (perfection) as each query/candidate pair is scored with either NS=0, SS=1 or VS=2.
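
As a small illustration of the two scales, the following Python sketch maps BROAD labels to their numeric values (NS=0, SS=1, VS=2) and averages the scores for one query. The helper names and the five example judgements are hypothetical and are not taken from the Evalutron data.

```python
# Numeric values of the BROAD categories, as defined in the legend above.
BROAD_VALUES = {"NS": 0, "SS": 1, "VS": 2}


def mean_broad(labels):
    """Average BROAD score for one query's candidate list (range 0 to 2)."""
    return sum(BROAD_VALUES[label] for label in labels) / len(labels)


def mean_fine(scores):
    """Average FINE score for one query's candidate list (range 0 to 100)."""
    return sum(scores) / len(scores)


# Hypothetical judgements for the 5 candidates returned for one query.
labels = ["VS", "SS", "SS", "NS", "VS"]
fine = [80, 55, 60, 10, 90]
print(mean_broad(labels), mean_fine(fine))
```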

Human Evaluation

Overall Summary Results

| Measure | FFMD1 | GK1 | GKC2 | GKC3 | PS1 | SS2 | SS4 | SS5 |

|---|---|---|---|---|---|---|---|---|

| Average Fine Score | 38.164 | 35.290 | 35.334 | 34.556 | 48.880 | 49.352 | 49.008 | 45.082 |

| Average Cat Score | 0.732 | 0.634 | 0.632 | 0.614 | 1.014 | 1.014 | 1.020 | 0.920 |

Friedman's Tests

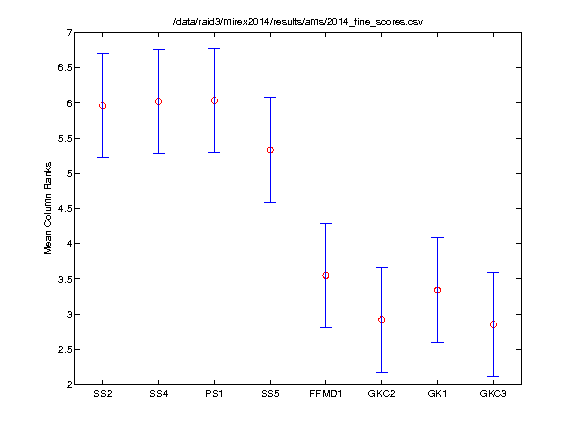

Friedman's Test (FINE Scores)

The Friedman test was run in MATLAB against the FINE summary data over the 50 queries.

Command: [c,m,h,gnames] = multcompare(stats, 'ctype', 'tukey-kramer','estimate', 'friedman', 'alpha', 0.05);
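
The Friedman test works on ranks: within each query, the systems' scores are ranked, and the systems are then compared by their mean rank over all queries. The sketch below is a plain Python illustration of that ranking step only, not the MATLAB code used for these results. The helper names are hypothetical. It assumes that the Mean column in the table below is a difference in mean ranks, and it omits the Tukey-Kramer bounds. The three data rows are the first three BAROQUE rows of the per-query FINE table, so the numbers it produces will not match the table, which is based on all 50 queries.

```python
def average_ranks(row):
    """Rank one query's scores in ascending order (1 = lowest), averaging ties."""
    order = sorted(range(len(row)), key=lambda i: row[i])
    ranks = [0.0] * len(row)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next value ties with the current one.
        while end + 1 < len(order) and row[order[end + 1]] == row[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of 1-based positions start+1..end+1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks


def mean_ranks(score_rows):
    """Average each system's rank over all queries (one row per query)."""
    ranked = [average_ranks(row) for row in score_rows]
    return [sum(col) / len(ranked) for col in zip(*ranked)]


systems = ["FFMD1", "GK1", "GKC2", "GKC3", "PS1", "SS2", "SS4", "SS5"]
# First three BAROQUE rows of the FINE table; the real test used all 50 queries.
fine_rows = [
    [56.7, 25.7, 18.1, 5.8, 62.5, 70.2, 67.3, 65.9],
    [37.7, 2.0, 23.4, 32.2, 85.7, 89.2, 93.3, 88.7],
    [67.4, 54.7, 49.4, 40.6, 85.9, 84.6, 83.8, 83.8],
]
ranks = dict(zip(systems, mean_ranks(fine_rows)))
# Difference in mean rank between two systems on this three-query subset.
diff_ss2_gkc3 = ranks["SS2"] - ranks["GKC3"]
print(ranks)
print(diff_ss2_gkc3)
```

Because higher scores receive higher ranks here, a positive difference means the first system of the pair tends to be scored as more similar.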

| TeamID | TeamID | Lowerbound | Mean | Upperbound | Significance |

|---|---|---|---|---|---|

| SS2 | SS4 | -1.543 | -0.060 | 1.423 | FALSE |

| SS2 | PS1 | -1.553 | -0.070 | 1.413 | FALSE |

| SS2 | SS5 | -0.853 | 0.630 | 2.113 | FALSE |

| SS2 | FFMD1 | 0.927 | 2.410 | 3.893 | TRUE |

| SS2 | GKC2 | 1.557 | 3.040 | 4.523 | TRUE |

| SS2 | GK1 | 1.137 | 2.620 | 4.103 | TRUE |

| SS2 | GKC3 | 1.627 | 3.110 | 4.593 | TRUE |

| SS4 | PS1 | -1.493 | -0.010 | 1.473 | FALSE |

| SS4 | SS5 | -0.793 | 0.690 | 2.173 | FALSE |

| SS4 | FFMD1 | 0.987 | 2.470 | 3.953 | TRUE |

| SS4 | GKC2 | 1.617 | 3.100 | 4.583 | TRUE |

| SS4 | GK1 | 1.197 | 2.680 | 4.163 | TRUE |

| SS4 | GKC3 | 1.687 | 3.170 | 4.653 | TRUE |

| PS1 | SS5 | -0.783 | 0.700 | 2.183 | FALSE |

| PS1 | FFMD1 | 0.997 | 2.480 | 3.963 | TRUE |

| PS1 | GKC2 | 1.627 | 3.110 | 4.593 | TRUE |

| PS1 | GK1 | 1.207 | 2.690 | 4.173 | TRUE |

| PS1 | GKC3 | 1.697 | 3.180 | 4.663 | TRUE |

| SS5 | FFMD1 | 0.297 | 1.780 | 3.263 | TRUE |

| SS5 | GKC2 | 0.927 | 2.410 | 3.893 | TRUE |

| SS5 | GK1 | 0.507 | 1.990 | 3.473 | TRUE |

| SS5 | GKC3 | 0.997 | 2.480 | 3.963 | TRUE |

| FFMD1 | GKC2 | -0.853 | 0.630 | 2.113 | FALSE |

| FFMD1 | GK1 | -1.273 | 0.210 | 1.693 | FALSE |

| FFMD1 | GKC3 | -0.783 | 0.700 | 2.183 | FALSE |

| GKC2 | GK1 | -1.903 | -0.420 | 1.063 | FALSE |

| GKC2 | GKC3 | -1.413 | 0.070 | 1.553 | FALSE |

| GK1 | GKC3 | -0.993 | 0.490 | 1.973 | FALSE |
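
One way to read the table above: a pair is flagged as significantly different when its interval from Lowerbound to Upperbound does not contain zero. The short Python check below illustrates this on four rows copied from the FINE table; the function name `interval_excludes_zero` is a hypothetical helper, not part of the original analysis.

```python
def interval_excludes_zero(lower, upper):
    """True when the interval [lower, upper] lies entirely on one side of zero."""
    return lower > 0 or upper < 0


# (system A, system B, lower bound, mean, upper bound, reported significance)
rows = [
    ("SS2", "SS4", -1.543, -0.060, 1.423, False),
    ("SS2", "FFMD1", 0.927, 2.410, 3.893, True),
    ("SS5", "FFMD1", 0.297, 1.780, 3.263, True),
    ("GK1", "GKC3", -0.993, 0.490, 1.973, False),
]
# Compare the rule against the Significance column for each sampled row.
consistent = all(
    interval_excludes_zero(lower, upper) == reported
    for _, _, lower, _, upper, reported in rows
)
print(consistent)  # prints True
```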

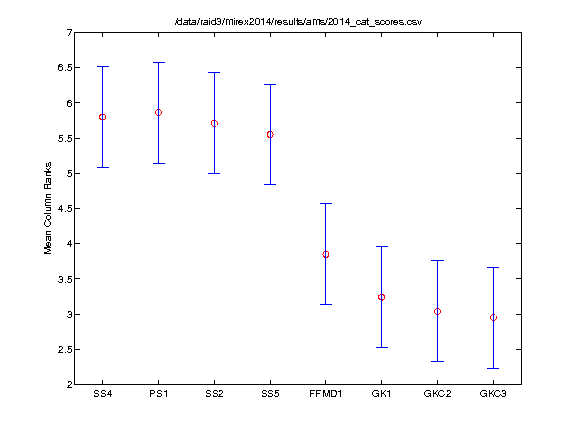

Friedman's Test (BROAD Scores)

The Friedman test was run in MATLAB against the BROAD summary data over the 50 queries.

Command: [c,m,h,gnames] = multcompare(stats, 'ctype', 'tukey-kramer','estimate', 'friedman', 'alpha', 0.05);

| TeamID | TeamID | Lowerbound | Mean | Upperbound | Significance |

|---|---|---|---|---|---|

| SS4 | PS1 | -1.492 | -0.060 | 1.372 | FALSE |

| SS4 | SS2 | -1.342 | 0.090 | 1.522 | FALSE |

| SS4 | SS5 | -1.182 | 0.250 | 1.682 | FALSE |

| SS4 | FFMD1 | 0.518 | 1.950 | 3.382 | TRUE |

| SS4 | GK1 | 1.128 | 2.560 | 3.992 | TRUE |

| SS4 | GKC2 | 1.328 | 2.760 | 4.192 | TRUE |

| SS4 | GKC3 | 1.418 | 2.850 | 4.282 | TRUE |

| PS1 | SS2 | -1.282 | 0.150 | 1.582 | FALSE |

| PS1 | SS5 | -1.122 | 0.310 | 1.742 | FALSE |

| PS1 | FFMD1 | 0.578 | 2.010 | 3.442 | TRUE |

| PS1 | GK1 | 1.188 | 2.620 | 4.052 | TRUE |

| PS1 | GKC2 | 1.388 | 2.820 | 4.252 | TRUE |

| PS1 | GKC3 | 1.478 | 2.910 | 4.342 | TRUE |

| SS2 | SS5 | -1.272 | 0.160 | 1.592 | FALSE |

| SS2 | FFMD1 | 0.428 | 1.860 | 3.292 | TRUE |

| SS2 | GK1 | 1.038 | 2.470 | 3.902 | TRUE |

| SS2 | GKC2 | 1.238 | 2.670 | 4.102 | TRUE |

| SS2 | GKC3 | 1.328 | 2.760 | 4.192 | TRUE |

| SS5 | FFMD1 | 0.268 | 1.700 | 3.132 | TRUE |

| SS5 | GK1 | 0.878 | 2.310 | 3.742 | TRUE |

| SS5 | GKC2 | 1.078 | 2.510 | 3.942 | TRUE |

| SS5 | GKC3 | 1.168 | 2.600 | 4.032 | TRUE |

| FFMD1 | GK1 | -0.822 | 0.610 | 2.042 | FALSE |

| FFMD1 | GKC2 | -0.622 | 0.810 | 2.242 | FALSE |

| FFMD1 | GKC3 | -0.532 | 0.900 | 2.332 | FALSE |

| GK1 | GKC2 | -1.232 | 0.200 | 1.632 | FALSE |

| GK1 | GKC3 | -1.142 | 0.290 | 1.722 | FALSE |

| GKC2 | GKC3 | -1.342 | 0.090 | 1.522 | FALSE |

Summary Results by Query

FINE Scores

These are the mean FINE scores per query assigned by Evalutron graders. The FINE scores for the 5 candidates returned per algorithm, per query, have been averaged. Values are bounded between 0 and 100. A perfect score would be 100. Genre labels have been included for reference.

| Genre | Query | FFMD1 | GK1 | GKC2 | GKC3 | PS1 | SS2 | SS4 | SS5 |

|---|---|---|---|---|---|---|---|---|---|

| BAROQUE | d001397 | 56.7 | 25.7 | 18.1 | 5.8 | 62.5 | 70.2 | 67.3 | 65.9 |

| BAROQUE | d001459 | 37.7 | 2 | 23.4 | 32.2 | 85.7 | 89.2 | 93.3 | 88.7 |

| BAROQUE | d002624 | 67.4 | 54.7 | 49.4 | 40.6 | 85.9 | 84.6 | 83.8 | 83.8 |

| BAROQUE | d015486 | 66.2 | 73.1 | 66.4 | 65.1 | 82.3 | 81.2 | 81.5 | 82.5 |

| BAROQUE | d019963 | 73.9 | 69.3 | 68.9 | 66.1 | 85.4 | 81.1 | 83.1 | 84.4 |

| BLUES | e002382 | 48.7 | 44.7 | 41.7 | 40.5 | 41.0 | 49.0 | 47.4 | 44.0 |

| BLUES | e004484 | 59.4 | 59.6 | 52.7 | 53.7 | 68.9 | 59.4 | 69.0 | 59.4 |

| BLUES | e006191 | 63.1 | 45.0 | 44.6 | 40.1 | 62.8 | 65.9 | 68.3 | 62.9 |

| BLUES | e008309 | 31.5 | 36.8 | 39.6 | 39.6 | 56.1 | 43.1 | 40.0 | 51.7 |

| BLUES | e017534 | 42.5 | 45.5 | 45.6 | 45.1 | 55.2 | 52.4 | 52.7 | 43.9 |

| CLASSICAL | d002003 | 15.5 | 10.7 | 1 | 1 | 20.7 | 7.9 | 2.6 | 13.0 |

| CLASSICAL | d007289 | 91.2 | 44.3 | 67.6 | 52.8 | 95.8 | 95.5 | 96.2 | 95.9 |

| CLASSICAL | d011638 | 2.7 | 5.6 | 3.1 | 3.1 | 37.5 | 26.9 | 19.0 | 29.8 |

| CLASSICAL | d012026 | 1.6 | 11.2 | 12.8 | 13.5 | 21.9 | 22.3 | 25.2 | 20.1 |

| CLASSICAL | d016699 | 25.2 | 23.3 | 26.2 | 26.0 | 28.8 | 31.0 | 26.7 | 31.5 |

| COUNTRY | e001114 | 47.5 | 36.0 | 46.5 | 53.0 | 58.0 | 45.0 | 47.5 | 67.0 |

| COUNTRY | e008446 | 45.5 | 57.6 | 60.5 | 58.6 | 51.4 | 54.0 | 64.1 | 49.8 |

| COUNTRY | e009159 | 11.1 | 36.7 | 31.4 | 26.3 | 51.0 | 33.0 | 32.4 | 39.8 |

| COUNTRY | e016660 | 28.1 | 18.3 | 19.9 | 13.2 | 22.9 | 29.4 | 20.6 | 20.9 |

| COUNTRY | e018065 | 22.0 | 25.4 | 27.4 | 31.9 | 45.0 | 36.2 | 42.2 | 44.1 |

| EDANCE | a005631 | 35.9 | 22.6 | 30.1 | 26.6 | 54.1 | 44.8 | 39.5 | 41.2 |

| EDANCE | a005956 | 19.3 | 14.3 | 12.9 | 12.7 | 33.6 | 42.2 | 45.9 | 25.1 |

| EDANCE | b001277 | 15.0 | 34.9 | 28.8 | 37.2 | 29.5 | 50.0 | 43.8 | 14.5 |

| EDANCE | f001351 | 51.8 | 56.5 | 55.0 | 53.0 | 35.9 | 56.0 | 60.1 | 27.4 |

| EDANCE | f013763 | 53.2 | 25.1 | 30.0 | 26.1 | 32.6 | 45.4 | 47.6 | 35.7 |

| JAZZ | e004761 | 39.7 | 29.8 | 44.3 | 35.8 | 24.6 | 51.4 | 51.6 | 32.0 |

| JAZZ | e008457 | 31.1 | 23.3 | 30.7 | 35.0 | 51.6 | 61.1 | 53.3 | 40.7 |

| JAZZ | e017361 | 51.4 | 31.8 | 39.7 | 44.0 | 53.2 | 52.0 | 52.9 | 31.1 |

| JAZZ | e018523 | 5.8 | 21.4 | 21.8 | 21.8 | 18.4 | 37.2 | 37.6 | 32.5 |

| JAZZ | e018792 | 38.4 | 49.1 | 37.4 | 43.5 | 44.2 | 40.6 | 39.2 | 39.4 |

| METAL | a005951 | 24.7 | 44.0 | 46.7 | 46.6 | 54.0 | 30.5 | 31.4 | 37.3 |

| METAL | b001596 | 28.9 | 56.5 | 43.0 | 52.7 | 70.3 | 72.8 | 71.6 | 37.5 |

| METAL | b018086 | 16.6 | 27.8 | 25.4 | 26.3 | 32.0 | 24.0 | 21.6 | 28.0 |

| METAL | b018190 | 14.1 | 16.9 | 19.7 | 18.8 | 26.3 | 27.3 | 25.7 | 25.6 |

| METAL | b019401 | 9 | 7 | 6.6 | 10.5 | 5.9 | 11.6 | 10.1 | 15.8 |

| RAPHIPHOP | a000917 | 76.6 | 63.2 | 61.3 | 61.2 | 81.1 | 79.6 | 79.0 | 64.9 |

| RAPHIPHOP | a002493 | 72.5 | 74.5 | 58.5 | 70.5 | 74.5 | 77.0 | 77.5 | 78.0 |

| RAPHIPHOP | a004652 | 57.9 | 53.0 | 51.9 | 54.6 | 52.0 | 68.3 | 51.4 | 56.2 |

| RAPHIPHOP | a008454 | 4.9 | 9.7 | 14.7 | 12.2 | 21.3 | 9.6 | 10.7 | 21.2 |

| RAPHIPHOP | b019567 | 1.9 | 2.3 | 0.8 | 1 | 2.1 | 8 | 5 | 5.8 |

| ROCKROLL | b006054 | 32.9 | 25.5 | 21.9 | 24.1 | 35.2 | 30.2 | 32.1 | 26.5 |

| ROCKROLL | b011339 | 47.1 | 42.2 | 40.6 | 41.9 | 48.9 | 57.8 | 55.0 | 44.1 |

| ROCKROLL | b012144 | 45.8 | 38.6 | 41.1 | 42.6 | 49.2 | 40.9 | 47.5 | 45.1 |

| ROCKROLL | b016472 | 41.3 | 17.3 | 16.8 | 10.6 | 66.4 | 47.3 | 52.7 | 47.9 |

| ROCKROLL | b017328 | 47.0 | 35.0 | 34.0 | 33.0 | 54.5 | 57.5 | 52.0 | 56.0 |

| ROMANTIC | d001837 | 29.8 | 44.8 | 43.9 | 42.9 | 55.6 | 61.8 | 69.3 | 49.6 |

| ROMANTIC | d004789 | 24.4 | 6.5 | 7.3 | 6.3 | 23.8 | 22.0 | 24.3 | 25.9 |

| ROMANTIC | d009318 | 30.5 | 48.5 | 41.0 | 16.0 | 67.5 | 66.0 | 69.5 | 66.5 |

| ROMANTIC | d014202 | 53.8 | 47.9 | 48.3 | 47.9 | 56.7 | 70.4 | 62.9 | 54.7 |

| ROMANTIC | d015836 | 69.4 | 69.0 | 65.7 | 64.2 | 70.2 | 67.0 | 66.7 | 68.8 |

BROAD Scores

These are the mean BROAD scores per query assigned by Evalutron graders. The BROAD scores for the 5 candidates returned per algorithm, per query, have been averaged. Values are bounded between 0 (not similar) and 2 (very similar). A perfect score would be 2. Genre labels have been included for reference.

| Genre | Query | FFMD1 | GK1 | GKC2 | GKC3 | PS1 | SS2 | SS4 | SS5 |

|---|---|---|---|---|---|---|---|---|---|

| BAROQUE | d001397 | 1 | 0.4 | 0.3 | 0 | 1.2 | 1.2 | 1.2 | 1.3 |

| BAROQUE | d001459 | 0.7 | 0 | 0.4 | 0.6 | 1.8 | 2 | 2 | 1.9 |

| BAROQUE | d002624 | 1.3 | 0.7 | 0.6 | 0.3 | 1.8 | 1.8 | 1.7 | 1.7 |

| BAROQUE | d015486 | 1.3 | 1.6 | 1.4 | 1.3 | 2 | 2 | 2 | 2 |

| BAROQUE | d019963 | 2 | 1.7 | 1.7 | 1.6 | 2 | 2 | 2 | 2 |

| BLUES | e002382 | 0.9 | 0.9 | 0.9 | 0.7 | 0.7 | 0.9 | 0.9 | 1 |

| BLUES | e004484 | 1.4 | 1.1 | 1.1 | 1.1 | 1.8 | 1.4 | 1.7 | 1.4 |

| BLUES | e006191 | 1.4 | 0.8 | 0.8 | 0.6 | 1.3 | 1.5 | 1.6 | 1.3 |

| BLUES | e008309 | 0.6 | 0.7 | 0.8 | 0.8 | 1.4 | 1 | 0.8 | 1.1 |

| BLUES | e017534 | 0.8 | 0.9 | 0.9 | 0.9 | 1.1 | 1 | 1.1 | 0.8 |

| CLASSICAL | d002003 | 0.3 | 0.2 | 0 | 0 | 0.4 | 0.1 | 0 | 0.3 |

| CLASSICAL | d007289 | 2 | 0.8 | 1.4 | 1 | 2 | 2 | 2 | 2 |

| CLASSICAL | d011638 | 0.1 | 0.1 | 0.1 | 0.1 | 1 | 0.8 | 0.8 | 0.8 |

| CLASSICAL | d012026 | 0 | 0.4 | 0.3 | 0.4 | 0.8 | 0.7 | 0.8 | 0.8 |

| CLASSICAL | d016699 | 0.4 | 0.3 | 0.4 | 0.4 | 0.3 | 0.3 | 0.2 | 0.5 |

| COUNTRY | e001114 | 0.6 | 0.6 | 0.6 | 0.8 | 1.1 | 0.5 | 0.7 | 1.5 |

| COUNTRY | e008446 | 0.9 | 1.2 | 1.3 | 1.3 | 1 | 1.1 | 1.5 | 1.1 |

| COUNTRY | e009159 | 0 | 0.7 | 0.6 | 0.5 | 1.4 | 0.6 | 0.6 | 0.9 |

| COUNTRY | e016660 | 0.6 | 0.1 | 0.2 | 0.1 | 0.2 | 0.6 | 0.2 | 0.3 |

| COUNTRY | e018065 | 0.4 | 0.3 | 0.3 | 0.5 | 0.7 | 0.7 | 0.6 | 0.7 |

| EDANCE | a005631 | 0.6 | 0.2 | 0.5 | 0.4 | 1 | 0.8 | 0.7 | 0.8 |

| EDANCE | a005956 | 0.1 | 0.1 | 0 | 0 | 0.5 | 0.8 | 0.9 | 0.3 |

| EDANCE | b001277 | 0.1 | 0.6 | 0.5 | 0.6 | 0.3 | 1 | 1 | 0.2 |

| EDANCE | f001351 | 1 | 1.1 | 1.1 | 1.1 | 0.5 | 1 | 1.1 | 0.3 |

| EDANCE | f013763 | 1.1 | 0.5 | 0.6 | 0.6 | 0.5 | 0.9 | 0.9 | 0.7 |

| JAZZ | e004761 | 0.8 | 0.5 | 0.8 | 0.6 | 0.3 | 1.2 | 1.2 | 0.4 |

| JAZZ | e008457 | 0.8 | 0.5 | 0.8 | 1 | 1.5 | 1.4 | 1.4 | 1 |

| JAZZ | e017361 | 0.7 | 0.4 | 0.5 | 0.6 | 1 | 0.9 | 0.8 | 0.2 |

| JAZZ | e018523 | 0.2 | 0.7 | 0.6 | 0.6 | 0.5 | 1 | 1.1 | 0.9 |

| JAZZ | e018792 | 0.6 | 0.5 | 0.5 | 0.4 | 0.8 | 0.6 | 0.8 | 0.7 |

| METAL | a005951 | 0.3 | 0.7 | 0.9 | 0.8 | 1.3 | 0.3 | 0.4 | 0.6 |

| METAL | b001596 | 0.4 | 1.3 | 0.8 | 1.1 | 1.7 | 1.7 | 1.6 | 0.6 |

| METAL | b018086 | 0 | 0.5 | 0.4 | 0.4 | 0.5 | 0.3 | 0.2 | 0.4 |

| METAL | b018190 | 0.1 | 0.3 | 0.3 | 0.3 | 0.4 | 0.4 | 0.4 | 0.4 |

| METAL | b019401 | 0.1 | 0.1 | 0 | 0.1 | 0.1 | 0.2 | 0.2 | 0.3 |

| RAPHIPHOP | a000917 | 1.8 | 1.4 | 1.3 | 1.3 | 2 | 2 | 2 | 1.6 |

| RAPHIPHOP | a002493 | 1.5 | 1.5 | 1 | 1.5 | 1.7 | 1.6 | 1.5 | 1.7 |

| RAPHIPHOP | a004652 | 1.3 | 1 | 1 | 1 | 1.1 | 1.5 | 1.1 | 1.2 |

| RAPHIPHOP | a008454 | 0.1 | 0.3 | 0.4 | 0.3 | 0.5 | 0.1 | 0.2 | 0.5 |

| RAPHIPHOP | b019567 | 0.1 | 0 | 0 | 0 | 0 | 0.2 | 0.1 | 0.2 |

| ROCKROLL | b006054 | 0.6 | 0.4 | 0.3 | 0.4 | 0.7 | 0.4 | 0.4 | 0.6 |

| ROCKROLL | b011339 | 0.9 | 0.8 | 0.7 | 0.7 | 0.7 | 1.3 | 1.3 | 0.8 |

| ROCKROLL | b012144 | 0.6 | 0.2 | 0.3 | 0.3 | 0.7 | 0.3 | 0.6 | 0.5 |

| ROCKROLL | b016472 | 0.9 | 0.2 | 0.2 | 0.1 | 1.4 | 1.1 | 1.3 | 1 |

| ROCKROLL | b017328 | 0.7 | 0.4 | 0.3 | 0.3 | 1 | 1.2 | 0.8 | 1.1 |

| ROMANTIC | d001837 | 0.4 | 0.8 | 0.7 | 0.7 | 1.2 | 1.4 | 1.7 | 0.9 |

| ROMANTIC | d004789 | 0.8 | 0.2 | 0.2 | 0.2 | 1 | 0.8 | 0.8 | 1 |

| ROMANTIC | d009318 | 0.4 | 0.6 | 0.5 | 0 | 0.9 | 1 | 1.1 | 1.1 |

| ROMANTIC | d014202 | 1.2 | 0.9 | 0.9 | 0.9 | 1.2 | 1.6 | 1.4 | 1 |

| ROMANTIC | d015836 | 1.7 | 1.5 | 1.4 | 1.4 | 1.7 | 1.5 | 1.6 | 1.6 |

Raw Scores

The raw data derived from the Evalutron 6000 human evaluations are located on the 2014:Audio Music Similarity and Retrieval Raw Data page.

Metadata and Distance Space Evaluation

The following reports provide evaluation statistics based on analysis of the distance space and metadata matches and include:

- Neighbourhood clustering by artist, album and genre

- Artist-filtered genre clustering

- How often the triangle inequality holds

- Statistics on 'hubs' (tracks similar to many tracks) and orphans (tracks that are not similar to any other tracks at N results).
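
Two of the statistics listed above can be illustrated with a short Python sketch on a toy distance matrix. This is only an illustration under stated assumptions: the function names and the 4-track matrix are hypothetical, the linked reports may compute these statistics differently (for example by sampling triples rather than enumerating all of them), and the sketch does not apply artist filtering.

```python
from itertools import permutations


def triangle_inequality_rate(d):
    """Fraction of ordered triples (i, j, k) with d[i][k] <= d[i][j] + d[j][k]."""
    triples = list(permutations(range(len(d)), 3))
    held = sum(1 for i, j, k in triples if d[i][k] <= d[i][j] + d[j][k])
    return held / len(triples)


def occurrence_counts(d, n_results):
    """Count how often each track appears in other tracks' top-n result lists."""
    n = len(d)
    counts = [0] * n
    for q in range(n):
        neighbours = sorted((j for j in range(n) if j != q), key=lambda j: d[q][j])
        for j in neighbours[:n_results]:
            counts[j] += 1
    return counts


# Toy symmetric distance matrix (hypothetical); d[0][2] breaks the inequality
# because d[0][1] + d[1][2] = 2.5 is smaller than d[0][2] = 3.0.
d = [
    [0.0, 1.0, 3.0, 4.0],
    [1.0, 0.0, 1.5, 4.5],
    [3.0, 1.5, 0.0, 5.0],
    [4.0, 4.5, 5.0, 0.0],
]
rate = triangle_inequality_rate(d)
counts = occurrence_counts(d, n_results=1)
# Orphans: tracks that never appear in any other track's result list.
orphans = [track for track, c in enumerate(counts) if c == 0]
print(rate, counts, orphans)
```

Tracks with high counts behave like hubs, while tracks with a count of zero are orphans at that number of results.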

Reports

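FFMD1 = [Peter Foster, György Fazekas, Matthias Mauch, Simon Dixon](https://music-ir.org/mirex/results/2014/ams/statsReports/FFMD1/report.txt)

GK1 = [Aggelos Gkiokas, Vassilis Katsouros, George Carayannis](https://music-ir.org/mirex/results/2014/ams/statsReports/GK1/report.txt)

GKC2 = [Aggelos Gkiokas, Vassilis Katsouros, George Carayannis](https://music-ir.org/mirex/results/2014/ams/statsReports/GKC2/report.txt)

GKC3 = [Aggelos Gkiokas, Vassilis Katsouros, George Carayannis](https://music-ir.org/mirex/results/2014/ams/statsReports/GKC3/report.txt)

PS1 = [Tim Pohle, Dominik Schnitzer](https://music-ir.org/mirex/results/2014/ams/statsReports/PS1/report.txt)

SS2 = [Klaus Seyerlehner, Markus Schedl](https://music-ir.org/mirex/results/2014/ams/statsReports/SS2/report.txt)

SS4 = [Klaus Seyerlehner, Markus Schedl](https://music-ir.org/mirex/results/2014/ams/statsReports/SS4/report.txt)

SS5 = [Abhinava Srivastava, Mohit Sinha](https://music-ir.org/mirex/results/2014/ams/statsReports/SS5/report.txt)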