Difference between revisions of "2007:Audio Music Similarity and Retrieval Results"


Revision as of 19:48, 16 September 2007


Introduction

These are the results for the 2007 running of the Audio Music Similarity and Retrieval task set. For background information about this task set please refer to the Audio Music Similarity and Retrieval page.

Each system was given 5000 songs chosen from IMIRSEL's "uspop", "uscrap", "american", "classical" and "sundry" collections and returned a 5000x5000 distance matrix. 100 songs were randomly selected as queries, and for each query the 5 most highly ranked songs out of the 5000 were extracted (after filtering out the query itself, results by the same artist, and members of the cover song collection). For each query, the returned results (candidates) from all participants were then grouped and evaluated by human graders using the Evalutron 6000 grading system. Each query/candidate set was evaluated by a single grader. For each query/candidate pair, graders provided two scores: one categorical score with three categories (NS, SS, VS, as explained below) and one fine score in the range from 0 to 10. A description and analysis is provided below.

The systems read in 30 second audio clips as their raw data. The same 30 second clips were used in the grading stage.
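The candidate-extraction step described above can be illustrated with a minimal Python sketch. It is not the code used for the evaluation: the function names, the `artists` list and the `cover_set` argument are hypothetical, and the toy matrix has 6 tracks instead of 5000.

```python
def top_candidates(distances, query, artists, cover_set, k=5):
    """Return the k nearest track indices for `query` after filtering."""
    row = distances[query]
    ranked = sorted(range(len(row)), key=lambda j: row[j])  # smallest distance first
    results = []
    for j in ranked:
        if j == query:
            continue  # drop the query itself
        if artists[j] == artists[query]:
            continue  # drop tracks by the same artist
        if j in cover_set:
            continue  # drop members of the cover song collection
        results.append(j)
        if len(results) == k:
            break
    return results


def pool_candidates(candidate_lists):
    """Group the candidates returned by all systems for one query (no duplicates)."""
    return sorted(set().union(*candidate_lists))


# Toy data: distance between tracks i and j is |i - j| / 10.
n = 6
distances = [[abs(i - j) / 10 for j in range(n)] for i in range(n)]
artists = ["a", "a", "b", "c", "d", "e"]  # hypothetical artist labels
cover_set = {3}                            # hypothetical cover song track

candidates = top_candidates(distances, 0, artists, cover_set, k=2)
pooled = pool_candidates([candidates, [4, 5]])
```

Pooling the lists before grading means that a candidate returned by several systems only needs to be judged once per query.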

Summary Data on Human Evaluations (Evalutron 6000)

Number of evaluators = 20

Number of evaluations per query/candidate pair = 1

Number of queries per grader = 5

Average size of the candidate lists = 48.32

Number of randomly selected queries = 100

Number of query/candidate pairs graded = 4832
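The figures above are mutually consistent, which the following short Python check makes explicit. The count of 12 systems is an assumption taken from the number of Team IDs in the legend; the variable names are illustrative only.

```python
evaluators = 20
queries_per_grader = 5
pairs_graded = 4832
systems = 12            # assumed: one per Team ID in the legend
returned_per_query = 5  # top 5 results per system, per query

queries = evaluators * queries_per_grader      # total number of queries graded
mean_list_size = pairs_graded / queries        # average pooled candidates per query
max_list_size = systems * returned_per_query   # upper bound if no two systems overlap

print(queries, mean_list_size, max_list_size)
```

Under that assumption the average candidate list is shorter than the upper bound, which is consistent with different systems sometimes returning the same candidate for a query.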

General Legend

Team ID

BK1 = Klaas Bosteels, Etienne E. Kerre 1

BK2 = Klaas Bosteels, Etienne E. Kerre 2

CB1 = Christoph Bastuck 1

CB2 = Christoph Bastuck 2

CB3 = Christoph Bastuck 3

GT = George Tzanetakis

LB = Luke Barrington, Douglas Turnbull, David Torres, Gert Lanckriet

ME = Michael I. Mandel, Daniel P. W. Ellis

PC = Aliaksandr Paradzinets, Liming Chen

PS = Tim Pohle, Dominik Schnitzer

TL1 = Thomas Lidy, Andreas Rauber, Antonio Pertusa, José Manuel Iñesta 1

TL2 = Thomas Lidy, Andreas Rauber, Antonio Pertusa, José Manuel Iñesta 2

Broad Categories

NS = Not Similar

SS = Somewhat Similar

VS = Very Similar

Calculating Summary Measures

Fine(1) = Sum of fine-grained human similarity decisions (0-10).

PSum(1) = Sum of human broad similarity decisions: NS=0, SS=1, VS=2.

WCsum(1) = 'World Cup' scoring: NS=0, SS=1, VS=3 (rewards Very Similar).

SDsum(1) = 'Stephen Downie' scoring: NS=0, SS=1, VS=4 (strongly rewards Very Similar).

Greater0(1) = NS=0, SS=1, VS=1 (binary relevance judgement).

Greater1(1) = NS=0, SS=0, VS=1 (binary relevance judgement using only Very Similar).

(1) Normalized to the range 0 to 1.
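As a rough illustration of how these measures relate, the Python sketch below computes all six from a list of judgements. The page does not state the exact normalization; this sketch assumes each sum is divided by the maximum attainable sum for that scheme. The function and variable names are hypothetical.

```python
# Weights for each broad-category scoring scheme, as listed above.
BROAD_WEIGHTS = {
    "PSum":     {"NS": 0, "SS": 1, "VS": 2},
    "WCsum":    {"NS": 0, "SS": 1, "VS": 3},
    "SDsum":    {"NS": 0, "SS": 1, "VS": 4},
    "Greater0": {"NS": 0, "SS": 1, "VS": 1},
    "Greater1": {"NS": 0, "SS": 0, "VS": 1},
}
FINE_MAX = 10.0


def summary_measures(broad, fine):
    """Return each measure scaled to 0..1 (assumed: divide by the best possible sum)."""
    n = len(broad)
    out = {"Fine": sum(fine) / (FINE_MAX * n)}
    for name, weights in BROAD_WEIGHTS.items():
        best = max(weights.values())  # score of a Very Similar judgement
        out[name] = sum(weights[b] for b in broad) / (best * n)
    return out


# Made-up judgements for five candidates.
broad = ["VS", "SS", "NS", "VS", "SS"]
fine = [9.0, 5.0, 1.0, 8.0, 4.5]
scores = summary_measures(broad, fine)
```

The schemes differ only in how much extra weight a Very Similar judgement receives, so a system with many SS results and few VS results scores lower under WCsum and SDsum than under PSum.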

Overall Summary Results

NB: The results for BK1 were interpolated from partial data due to a runtime error.

[Table not available: ams07_overall_summary2.csv]

Audio Music Similarity and Retrieval Runtime Data

[Table not available: as06_runtime.csv]

For a description of the computers the submissions ran on, see MIREX_2006_Equipment.

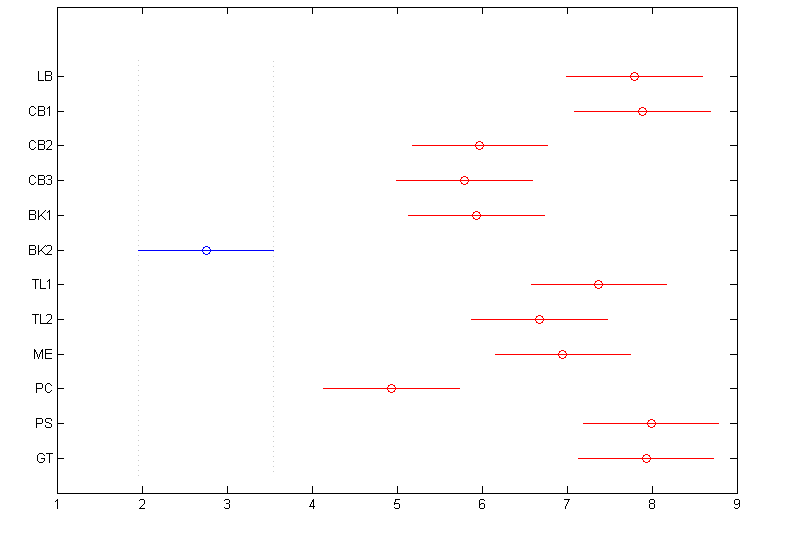

Friedman Test with Multiple Comparisons Results (p=0.05)

The Friedman test was run in MATLAB against the Fine summary data over the 100 queries.

Command: [c,m,h,gnames] = multcompare(stats, 'ctype', 'tukey-kramer','estimate', 'friedman', 'alpha', 0.05);

[Table not available: ams07_sum_friedman_fine.csv]

[Table not available: ams07_detail_friedman_fine.csv]
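For readers without MATLAB, the omnibus part of the Friedman test can be sketched in plain Python. This is an illustration only: it computes the per-system mean ranks and the Friedman chi-square statistic without a tie correction, and it does not reproduce the Tukey-Kramer pairwise comparisons performed by the multcompare command above. The function names and the toy scores are hypothetical.

```python
def average_ranks(row):
    """Rank the values in one row (1 = smallest), averaging the ranks of ties."""
    order = sorted(range(len(row)), key=lambda i: row[i])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for t in range(i, j + 1):
            ranks[order[t]] = mean_rank
        i = j + 1
    return ranks


def friedman(scores):
    """scores[q][s] is the score of system s on query q.

    Returns (mean_ranks, chi2), where chi2 is the uncorrected Friedman statistic.
    """
    n = len(scores)      # number of queries (blocks)
    k = len(scores[0])   # number of systems (treatments)
    rank_sums = [0.0] * k
    for row in scores:
        for s, r in enumerate(average_ranks(row)):
            rank_sums[s] += r
    chi2 = 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)
    return [r / n for r in rank_sums], chi2


# Made-up mean Fine scores: 4 queries (rows) by 3 systems (columns).
toy_scores = [
    [7.0, 5.0, 2.0],
    [8.0, 6.0, 3.0],
    [6.5, 4.0, 1.0],
    [9.0, 7.5, 5.0],
]
mean_ranks, chi2 = friedman(toy_scores)
```

Because the test works on within-query ranks, it compares systems on how consistently one outscores another across queries, not on the absolute size of the score differences.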

Summary Results by Query

These are the mean FINE scores per query assigned by Evalutron graders. The FINE scores for the 5 candidates returned per algorithm, per query, have been averaged. Values are bounded between 0.0 and 10.0. A perfect score would be 10. Genre labels have been included for reference.

[Table not available: ams07_fine_by_query_with_genre.csv]

These are the mean BROAD scores per query assigned by Evalutron graders. The BROAD scores for the 5 candidates returned per algorithm, per query, have been averaged. Values are bounded between 0 (not similar) and 2 (very similar). A perfect score would be 2. Genre labels have been included for reference.

[Table not available: ams07_broad_by_query_with_genre.csv]
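The per-query averaging described in this section can be sketched as follows. The tuple layout `(query, system, broad, fine)` and the identifiers in the example are assumptions made for illustration; they are not the format of the Evalutron 6000 data.

```python
from collections import defaultdict

BROAD_VALUE = {"NS": 0, "SS": 1, "VS": 2}  # broad categories mapped to 0..2


def mean_scores_by_query(judgements):
    """Average FINE and BROAD scores for each (query, system) pair.

    `judgements` is an iterable of (query, system, broad, fine) tuples.
    Returns {(query, system): (mean_fine, mean_broad)}.
    """
    fine = defaultdict(list)
    broad = defaultdict(list)
    for query, system, b, f in judgements:
        fine[(query, system)].append(f)
        broad[(query, system)].append(BROAD_VALUE[b])
    return {
        key: (sum(fine[key]) / len(fine[key]), sum(broad[key]) / len(broad[key]))
        for key in fine
    }


# Made-up judgements for the 5 candidates of one system on one query.
judgements = [
    ("q1", "GT", "VS", 9.0),
    ("q1", "GT", "SS", 5.0),
    ("q1", "GT", "NS", 1.0),
    ("q1", "GT", "VS", 8.0),
    ("q1", "GT", "SS", 4.5),
]
means = mean_scores_by_query(judgements)
```

With five candidates per system per query, each mean FINE value lies between 0.0 and 10.0 and each mean BROAD value between 0 and 2, matching the bounds stated above.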

Raw Scores

The raw data derived from the Evalutron 6000 human evaluations are located on the Audio Music Similarity and Retrieval Raw Data page.