Grand Challenge 2014: User Experience

Purpose

Holistic, user-centered evaluation of the user experience in interacting with complete, user-facing music information retrieval (MIR) systems.

Goals

- To inspire the development of complete MIR systems.

- To promote the notion of user experience as a first-class research objective in the MIR community.

Dataset

A set of 10,000 music audio tracks is provided for the GC14UX. It will be a subset of tracks drawn from the Jamendo collection's CC-BY licensed works.

The Jamendo collection contains music in a variety of genres and moods, but is unfamiliar to most listeners. This mitigates the user experience bias that could be induced by the differential presence (or absence) of popular or known music within the participating systems.

As of May 20, 2014, the Jamendo collection contains 14,742 tracks with the CC-BY license. The CC-BY license allows others to distribute, modify, optimize and build upon a work, even commercially, as long as they give credit for the original creation. This is one of the most permissive licenses possible.

The 10,000 tracks in GC14UX will be sampled (with the aim of maximizing musical variety) from the CC-BY licensed part of the Jamendo collection and made available for participants (system developers) to download and use in building their systems.
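The exact sampling procedure is not specified here. As one possible illustration of sampling for variety, the sketch below cycles over groups of tracks so that small groups are not crowded out by large ones. The `genre` field, the function name `sample_for_variety`, and the round-robin strategy are all assumptions made for this example, not the GC14UX team's method.

```python
import random
from collections import defaultdict


def sample_for_variety(tracks, k, key="genre", seed=0):
    """Pick up to k tracks, taking one track per group in turn.

    `tracks` is a list of dicts; `key` names a hypothetical metadata
    field (for example a genre or mood tag) used to form the groups.
    """
    rng = random.Random(seed)
    groups = defaultdict(list)
    for track in tracks:
        groups[track[key]].append(track)
    for members in groups.values():
        rng.shuffle(members)

    picked = []
    # Round-robin over the groups (sorted for reproducibility) until
    # k tracks are picked or every group is exhausted.
    while len(picked) < k and groups:
        for name in sorted(groups):
            if len(picked) == k:
                break
            picked.append(groups[name].pop())
            if not groups[name]:
                del groups[name]
    return picked
```

With 90 tracks tagged "rock" and 10 tagged "jazz", asking for 20 tracks yields 10 of each, whereas uniform random sampling would be expected to return only about 2 jazz tracks.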

Participating Systems

Unlike conventional MIREX tasks, participants are not asked to submit their systems. Instead, the systems will be hosted by their developers. All participating systems need to be constructed as websites accessible to users through normal web browsers. Participating teams will submit the URLs to their systems to the GC14UX team.

To ensure a consistent experience, evaluators will see participating systems in a fixed-size window of 1024x768 pixels. Please test your system at this window size.

Potential Participants

Please put your names and email contacts in the following table. You are encouraged to give your team a cool name!

| (Cool) Team Name | Name(s) | Email(s) |
|---|---|---|
| The MIR UX Master | Dr. MIR | mir@domain.com |

Evaluation

As the name of the Grand Challenge indicates, the evaluation will be user-centered. All systems will be used by a number of human evaluators, who will rate them on several of the most important criteria for evaluating user experience.

Criteria

Note that the evaluation criteria or their descriptions may change slightly in the months leading up to the submission deadline, as we test them and work to improve them.

Given that the GC14UX is all about how users perceive their experiences of the systems, we intend to capture user perceptions in a minimally intrusive manner and not to burden the users/evaluators with too many questions or required data inputs. The following criteria are grounded in the literature of Human Computer Interaction (HCI) and User Experience (UX), with careful consideration given to striking a balance between being comprehensive and minimizing evaluators' cognitive load.

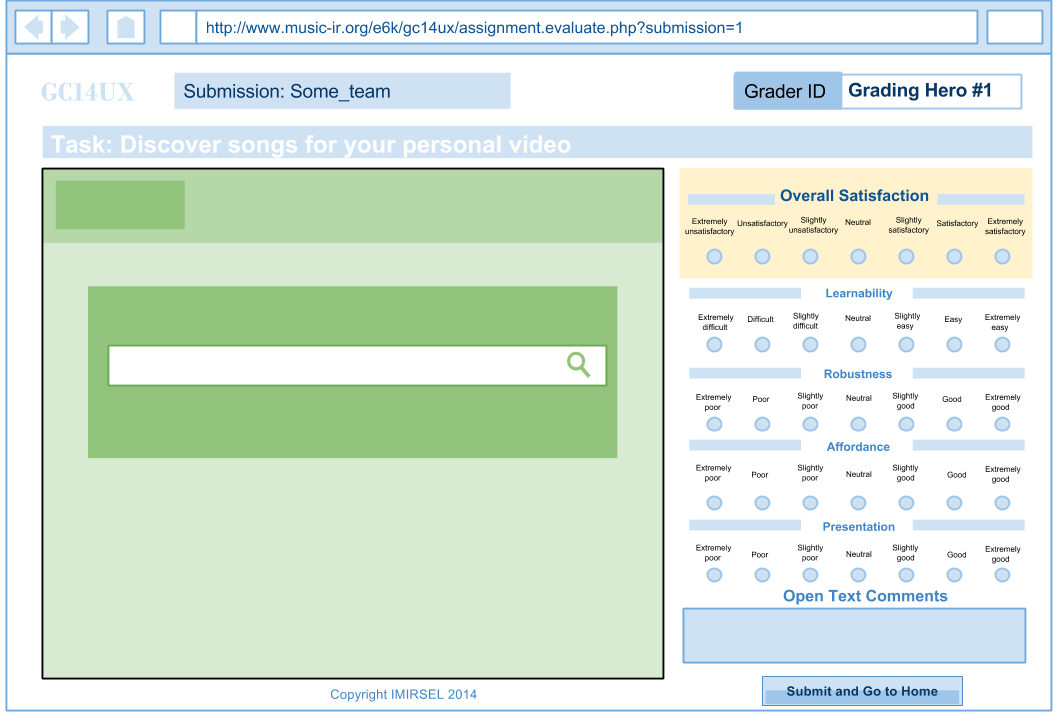

Evaluators will rate systems on the following criteria:

- Overall satisfaction: Overall, how pleasurable do you find the experience of using this system?

Very unsatisfactory / Unsatisfactory / Slightly unsatisfactory / Neutral / Slightly satisfactory / Satisfactory / Very satisfactory

- Learnability: How easy was it to figure out how to use the system?

Very difficult / Difficult / Slightly difficult / Neutral / Slightly easy / Easy / Very easy

- Robustness: How good is the system’s ability to warn you when you’re about to make a mistake and allow you to recover?

Very Poor / Poor / Slightly Poor / Neutral / Slightly Good / Good / Excellent (or: Not Applicable)

- Affordances: How well does the system allow you to perform what you want to do?

Very Poor / Poor / Slightly Poor / Neutral / Slightly Good / Good / Excellent

- Presentation: How well does the system communicate what’s going on? (How well do you feel the system informs you of its status? Can you clearly understand the labels and words used in the system? How visible are all of your options and menus when you use this system?)

- Aesthetics: How beautiful is the design? (Is it aesthetically pleasing?)

Very Poor / Poor / Slightly Poor / Neutral / Slightly Good / Good / Excellent

- Feedback (Optional): An open-ended question is provided but is optional for evaluators to give feedback if they wish to do so.

Evaluators

Evaluators will be users aged 18 and above. For this round, evaluators will be drawn primarily from the MIR community through solicitations via the ISMIR-community mailing list. The Evaluation Webforms developed by the GC14UX team will ensure that all participating systems get an equal number of evaluators.
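How the webforms balance evaluators across systems is not described here. One simple way to do it, sketched below purely as an assumption, is to hand each incoming evaluator the system that has been assigned the fewest times so far. The function name `next_assignment` and the in-memory `counts` dictionary are hypothetical.

```python
def next_assignment(counts, already_seen=()):
    """Return the least-assigned system this evaluator has not yet rated.

    `counts` maps a system name to the number of evaluators assigned
    to it so far, and is updated in place. Returns None when the
    evaluator has already seen every system.
    """
    candidates = [name for name in counts if name not in already_seen]
    if not candidates:
        return None
    # Ties are broken by name so the result is deterministic.
    choice = min(candidates, key=lambda name: (counts[name], name))
    counts[choice] += 1
    return choice
```

Starting from three systems with zero assignments each, six consecutive calls leave every system with exactly two evaluators.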

Task for evaluators

To motivate the evaluators, a defined yet open task is given to them:

You are creating a short video about a memorable occasion that happened to you recently, and you need to find some (copyright-free) songs to use as background music.

The task ensures that evaluators have a (more or less) consistent goal when they interact with the systems. The goal is flexible and authentic to the evaluators' lives ("a recent, memorable occasion"). As the task is not too specific, evaluators can potentially look for a wide range of music in terms of genre, mood and other aspects. This allows great flexibility and virtually unlimited possibilities in system design.

Another important consideration in designing the task is the music collection available for this GC14UX: the Jamendo collection. Jamendo music is not well known to most users/evaluators, whereas many more common music information tasks are influenced to some degree by users' familiarity with the songs and by song popularity. Through this task of "finding (copyright-free) background music for a self-made video", we strive to minimize the need to look for familiar or popular music.

Evaluation results

Statistics of the scores given by all evaluators will be reported: the mean and the average deviation. Meaningful text comments from the evaluators will also be reported.
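As a minimal sketch of these two statistics, the example below assumes that the seven scale labels are coded as integers 1 to 7, that "Not Applicable" answers are recorded as `None` and excluded, and that "average deviation" means the mean absolute deviation from the mean. None of these conventions is stated in this page; they are assumptions for illustration, as is the function name `summarize`.

```python
from statistics import mean


def summarize(scores):
    """Return (mean, average deviation) of the numeric scores.

    Entries equal to None (assumed here to encode "Not Applicable")
    are ignored. Returns None if no numeric score remains.
    """
    valid = [s for s in scores if s is not None]
    if not valid:
        return None
    m = mean(valid)
    # Average deviation: mean of the absolute distances from the mean.
    avg_dev = mean([abs(s - m) for s in valid])
    return m, avg_dev
```

For example, the scores 4 and 6 plus one "Not Applicable" answer give a mean of 5 and an average deviation of 1.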

Evaluation Webforms

To assist the evaluators and minimize their burden, the GC14UX team will provide a set of evaluation forms which wrap around the participating systems. As shown in the following image, the evaluation webforms are for scoring the participating systems, with their client interfaces embedded within an iframe on the left side of the webform.

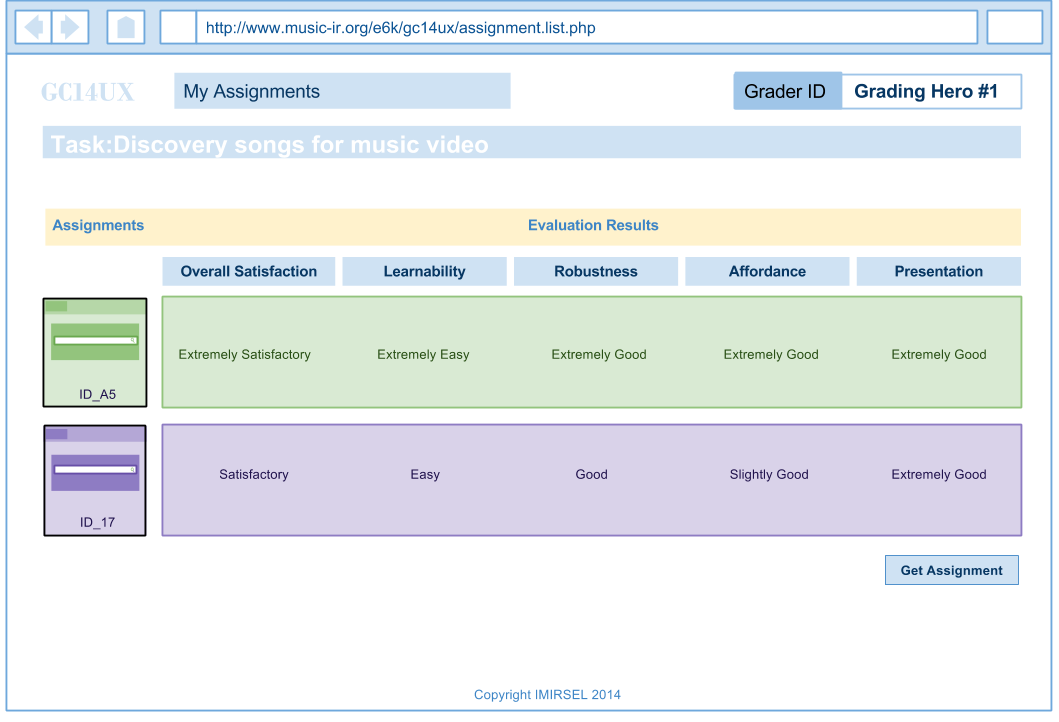

Users can take on as many submissions as they want in the My Assignments page. They can also return to the evaluation page at any time by clicking the thumbnail of the submission.

Organization

Important Dates

- July 1: announce the GC

- Sep. 21st: deadline for system submission

- Sep. 28th: start the evaluation

- Oct. 20th: close the evaluation system

- Oct. 27th: announce the results

- Oct. 31st: MIREX and GC session in ISMIR2014

What to Submit

A URL to the participating system.

Contacts

The GC14UX team consists of:

- J. Stephen Downie, University of Illinois (MIREX director)

- Xiao Hu, University of Hong Kong (ISMIR2014 co-chair)

- Jin Ha Lee, University of Washington (ISMIR2014 program co-chair)

- Yi-Hsuan (Eric) Yang, Academia Sinica, Taiwan (ISMIR2014 program co-chair)

- David Bainbridge, Waikato University, New Zealand

- Kahyun Choi, University of Illinois

- Peter Organisciak, University of Illinois

Inquiries, suggestions, questions and comments are all highly welcome! Please contact Prof. Downie or anyone on the team.